The infinite monkey and the Rubik's cube: random walk vs God's Number

A while back I wanted to better understand the math behind the Rubik's cube, so I built a project that basically runs in an infinite loop: a scrambled cube making random moves until, at some point, it returns to the solved state. The premise is the classic infinite monkey theorem: given enough time, any improbable event happens.

I dropped the iframe into the blog too. You can leave it open in a tab and come back in a few... billion years to see if it worked out.

The funny thing is, to anyone glancing at the cube from a distance, it kind of feels like "it should solve itself eventually, right?". After all, it's just 6 faces, 9 little squares each. How hard can it be?

The answer involves graph theory, pseudo-random number generation, the lifetime of the universe, and a number that, honestly, I can't even properly visualize.

The number nobody can visualize

The 3x3x3 cube has 43,252,003,274,489,856,000 possible states. If you want to feel that number, it's roughly:

43 quintillion.

6,000 times the estimated number of grains of sand on all the beaches on Earth.

If each state were one millisecond, you'd need 1.4 billion years to count all of them.

The formula that gives you this number is (8! × 3⁷) × (12! × 2¹¹) / 2. The first two terms are the corner permutations and orientations, the next two are the edges, and the division by 2 is because half of the configurations are physically unreachable (you can't get to them just by twisting; you'd have to disassemble the cube).

Those 43 quintillion are the size of the cube's state space. And that's where the random walk game starts to get nasty.

The first irony: algorithms always come home

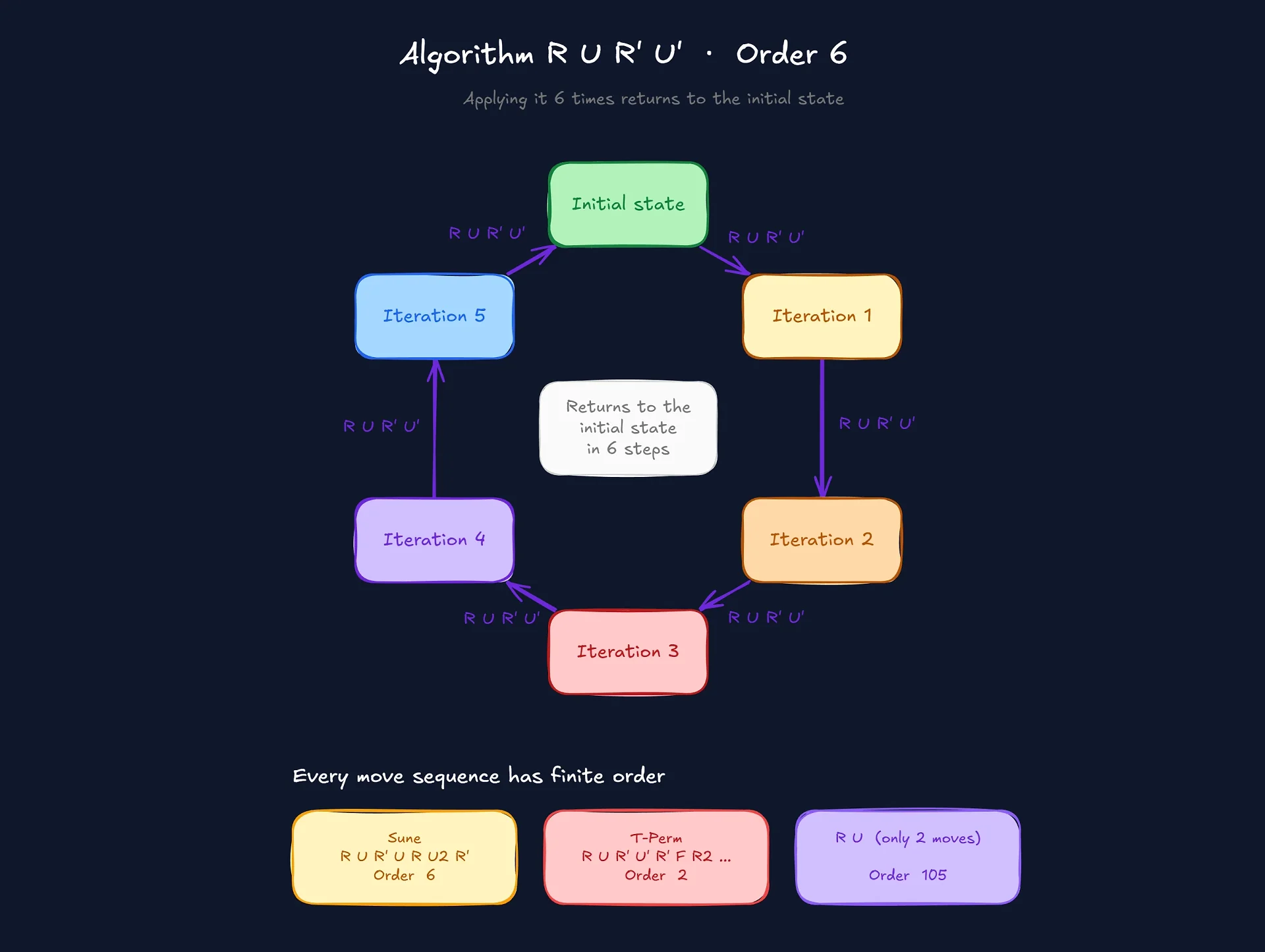

Before talking about the random walk, there's something that seems obvious but isn't: every cube algorithm, repeated infinitely, eventually returns to the initial state.

Take the famous "sexy move", the R U R' U' that everyone learning to solve a cube memorizes first. Repeat it 6 times and the cube goes back to exactly how it started. The "order" of this algorithm is 6.

Other classic examples:

T-Perm (

R U R' U' R' F R2 U' R' U' R U R' F'): order 2. Apply it twice and you're back.Sune (

R U R' U R U2 R'): order 6.Y-Perm: order 2.

Even the simplest possible sequence, R U (just two moves), has order 105. Repeat R U one hundred and five times in a row and the cube returns to its original state. This is counterintuitive, but it's an inevitable property of any finite group: every sequence of operations has a finite order.

The intuition here is simple: the cube is a finite group. The set of all possible states is closed. If you keep applying the same transformation, you'll eventually land on a state you've already visited, and from there everything repeats. This is Lagrange's theorem applied to a toy.

And it's along this line of reasoning that a lot of people make the mistake of thinking: "ah, so a random walk will also return to the solved state at some point". It will. The problem is the "at some point".

The cube is a graph (and that changes everything)

Here's where the post gets interesting for programmers.

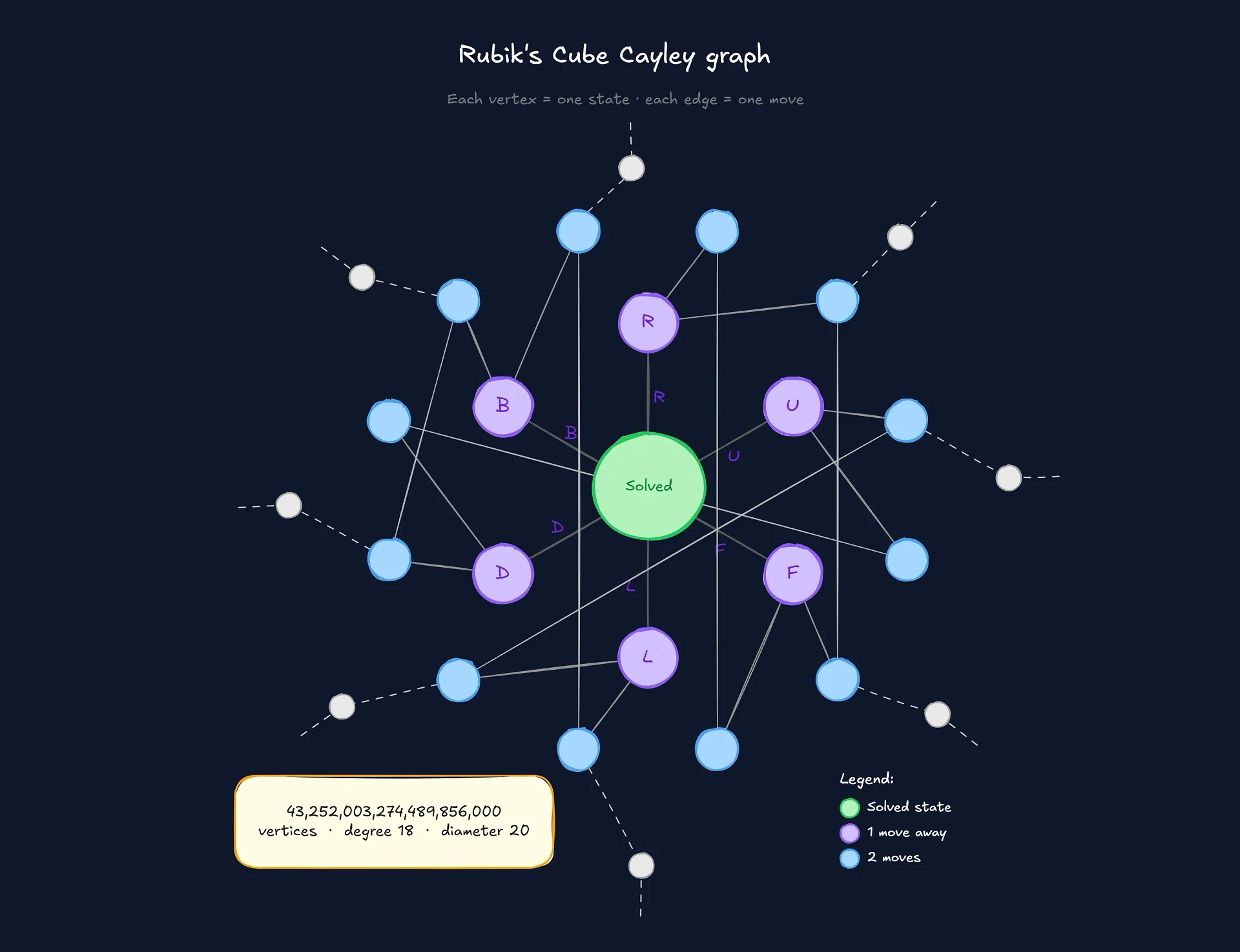

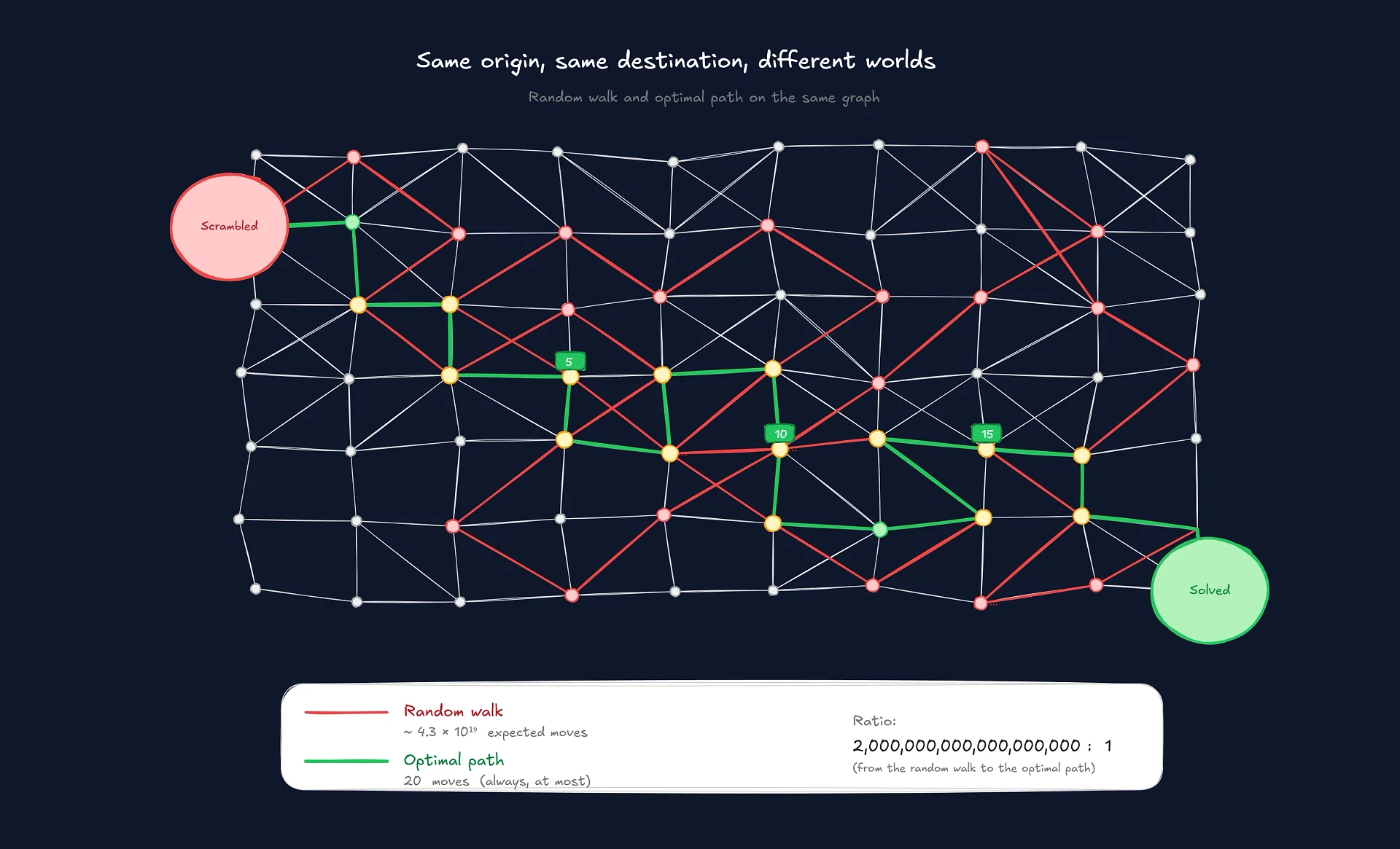

Picture the cube not as a physical object, but as a data structure. Each possible state is a vertex in a graph. Each move is an edge connecting one state to another. This graph has a name: the Cayley graph of the cube group.

Let's count:

Vertices: 43,252,003,274,489,856,000 (the states).

Edges per vertex: 18 (6 faces, and each face allows 3 moves: 90°, 180°, 270°).

Total edges: ~3.9 × 10²⁰.

This completely changes the problem. Now solving the cube stops being a matter of "twisting until it works" and becomes a classic graph search problem: finding the shortest path from the current vertex to the solved vertex.

The keyword here is "shortest", and that's where things get absurd.

God's Number

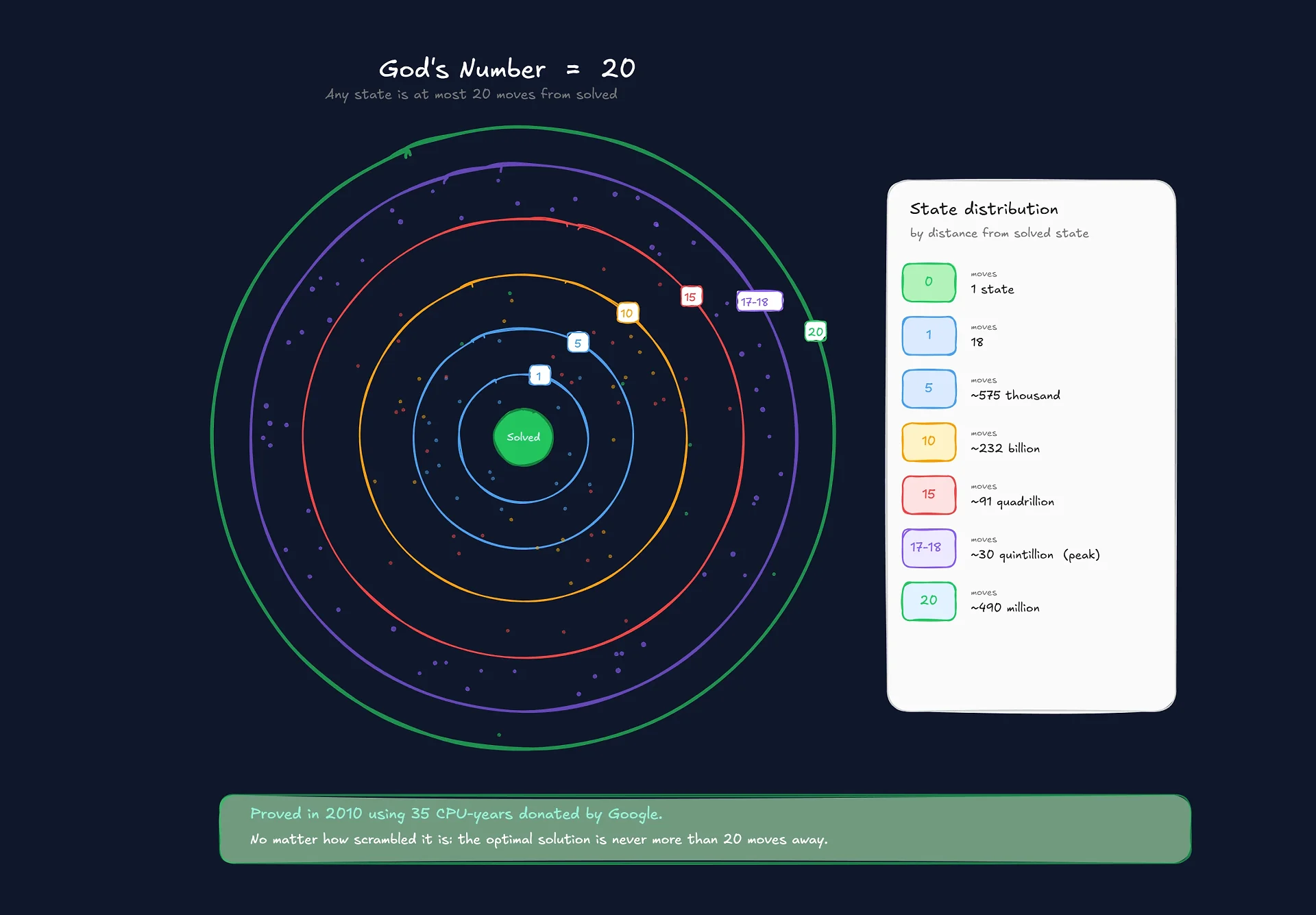

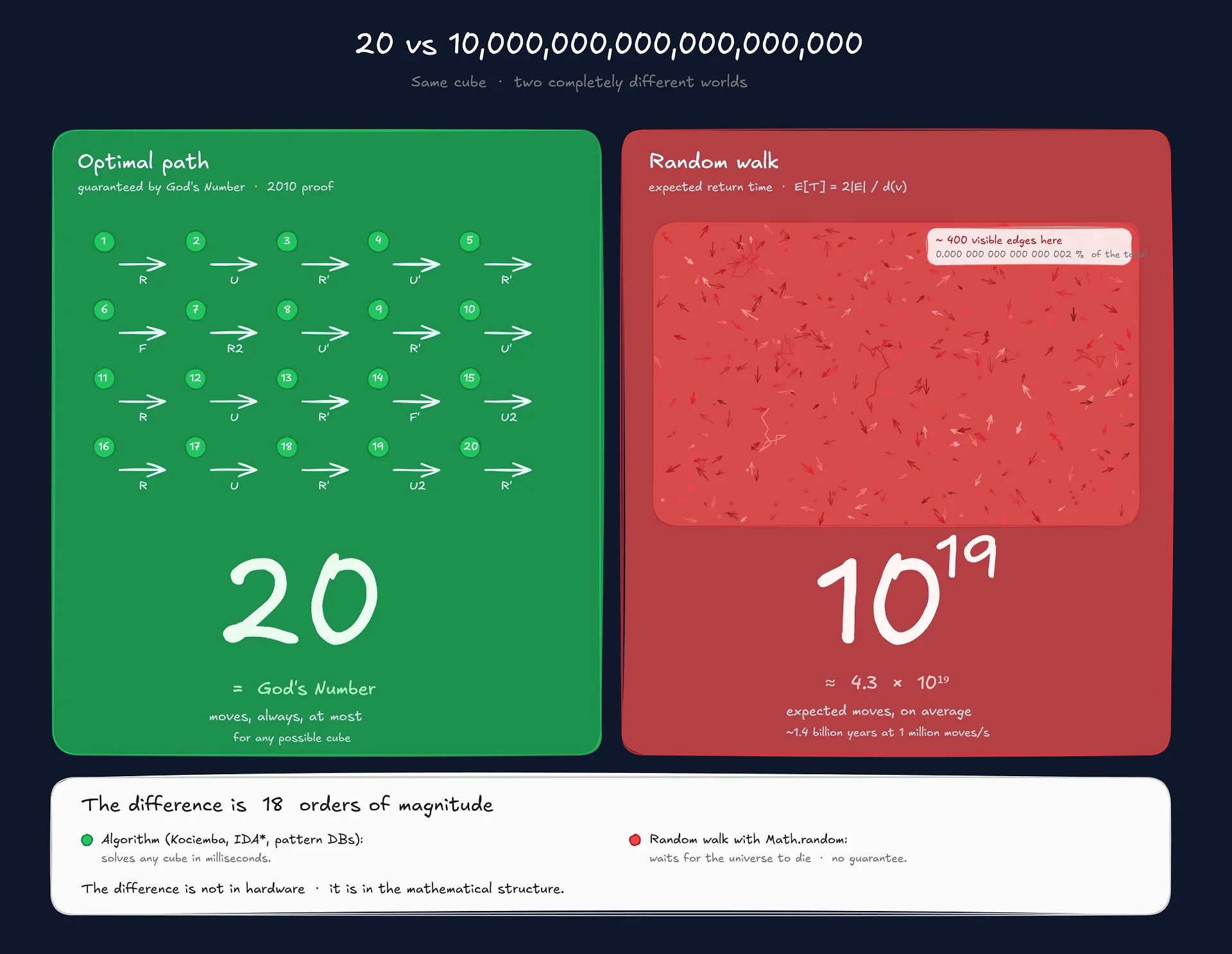

In 2010, after 35 CPU-years running on servers donated by Google, a team of mathematicians proved a result that had been open for decades: the diameter of the cube's Cayley graph is 20.

In English: starting from any of the 43 quintillion possible states, there is a sequence of at most 20 moves that solves the cube. This number 20 became known as God's Number, because it's the minimum number of moves an omniscient entity would need to solve any cube.

It doesn't matter how scrambled your cube is. It doesn't matter if you spent 3 hours scrambling it with all your might. The optimal solution is never more than 20 moves away.

And here lies the first cruel irony: while the optimal solution is always at most 20 moves away, the random walk doesn't care about that. It'll take paths hundreds, thousands, millions of moves long, dancing around the solution over and over without ever stumbling into it by chance.

Finding that 20-step minimum path is another problem entirely. Traditional BFS would need to load a sizable chunk of the state space into memory, which is unfeasible (43 quintillion × ~20 bytes per state = ~900 exabytes). That's why modern solvers like Kociemba's algorithm use precomputed tables (pattern databases) and heuristic search with IDA*. But that's a topic for another post.

The math of the random walk

Let's get to the heart of the post: why is the random walk so bad?

There's a classic formula in graph theory for the expected return time of a random walk on a connected undirected graph. To return to a vertex v:

E[return time to v] = 2|E| / d(v)Where |E| is the number of edges and d(v) is the vertex's degree. For our cube:

E[return to solved] = 2 × (3.9 × 10²⁰) / 18 ≈ 4.3 × 10¹⁹ movesIn other words, on average, a random walk takes about 43 quintillion moves to return to the solved state. Which is, not coincidentally, the same number of states the cube has. This happens because the graph is regular (every vertex has degree 18), so the random walk becomes uniformly distributed across all states in the long run.

Let's convert this into time:

Speed | Expected time to solve |

|---|---|

10 moves/s (human) | ~136 billion years |

100 moves/s (animation) | ~13.6 billion years |

1,000 moves/s (script) | ~1.36 billion years |

1 million moves/s (optimized) | ~1.36 million years |

1 billion moves/s (low-level C++) | ~1,360 years |

The universe is ~13.8 billion years old. If you'd been running the random walk at 100 moves per second since the Big Bang, it probably would have solved by now. Probably. No guarantee, because that's the mathematical expectation, not an upper bound.

This is the essence of the second irony: the solution is always 20 steps away, but the random walk passes right next to it trillions of times without ever falling in.

The pseudo-randomness trap

OK, but all of this assumes that our script's "random" is... random. And here's where the part that matters for programmers comes in.

Computers are deterministic machines. They don't know how to be random. What we call random() in any modern language is actually a PRNG (Pseudo-Random Number Generator): an algorithm that produces sequences that look random but are entirely predictable if you know the internal state.

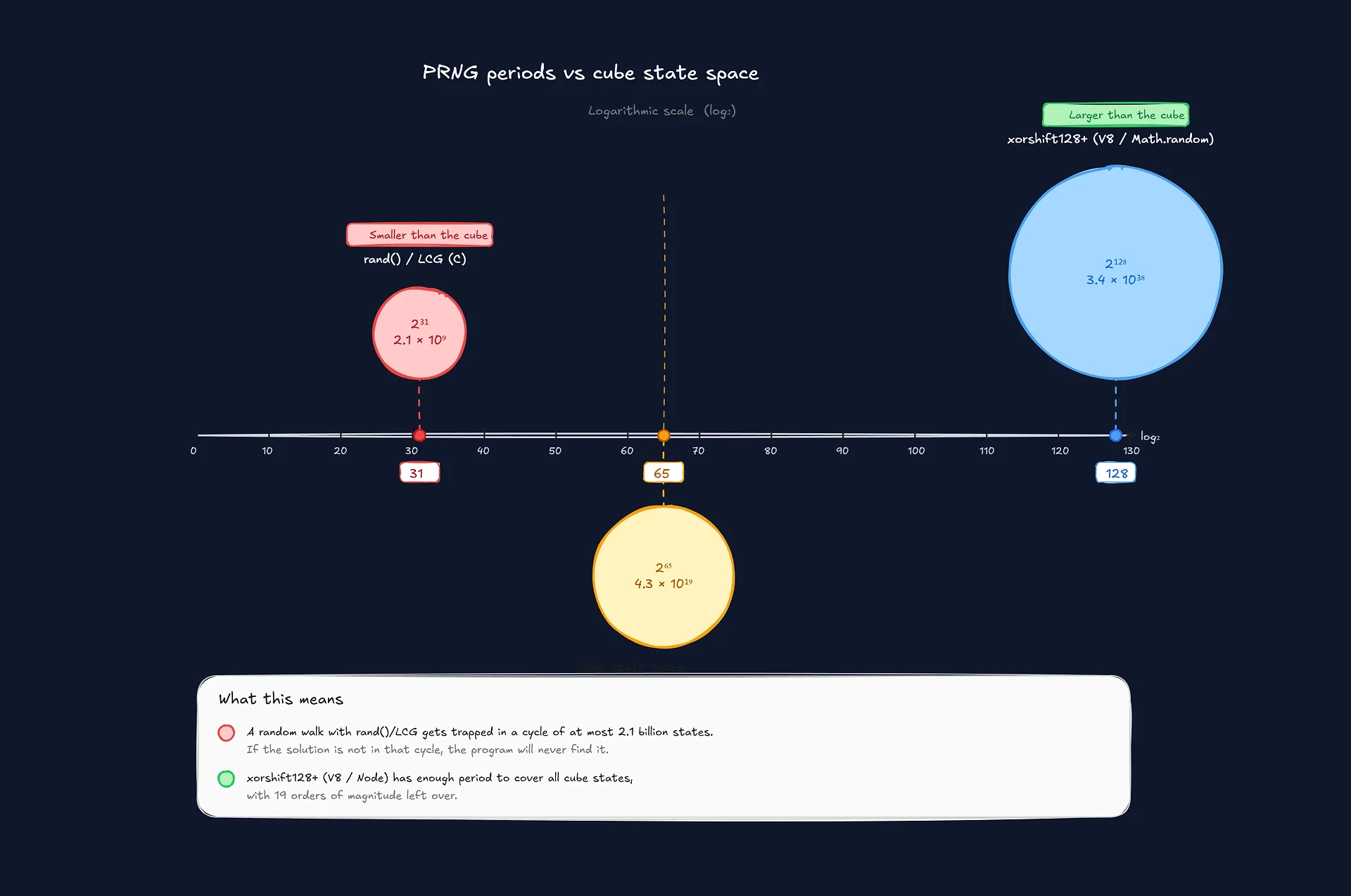

JavaScript's Math.random() in V8 (Chrome, Node) currently uses xorshift128+, which has a period of 2¹²⁸ - 1, or ~3.4 × 10³⁸. That's much larger than the cube's 4.3 × 10¹⁹ states, so in theory it can produce sequences long enough to cover the state space.

// My project's loop, simplified:

const moves = ["R", "R'", "U", "U'", "F", "F'",

"L", "L'", "D", "D'", "B", "B'",

"R2", "U2", "F2", "L2", "D2", "B2"];

while (!cube.isSolved()) {

const move = moves[Math.floor(Math.random() * moves.length)];

cube.apply(move);

}But hold on. There are three hidden traps here that change the game:

1. The seed predetermines everything.

A PRNG is a function f(state) → (next number, next state). If you fix the initial seed, the entire sequence of "random moves" your cube makes is completely predetermined. There's no real randomness. There's a fixed sequence that anyone with the seed could reproduce move by move.

In security this is a serious problem (which is why cryptographic PRNGs like crypto.getRandomValues() exist). For the cube it's just a philosophical curiosity: the infinite monkey in my project isn't actually "trying" anything; it's executing a fixed path through the graph.

2. Not every PRNG has enough period.

xorshift128+ is decent. But if you were to implement this in C using libc's rand() (which is still a Linear Congruential Generator), the typical period is only 2³¹ - 1, or ~2 billion. That's 20 billion times smaller than the cube's state space.

The result: your C program would never be able to visit most of the states. It would enter a deterministic cycle of at most 2 billion configurations, and if the solution wasn't in that cycle, the program would run forever, literally.

3. Randomness ≠ uniformity.

Even with a PRNG of enormous period, you have no guarantee of uniform distribution across the 43 quintillion states. PRNGs like xorshift are good, but they fail advanced statistical tests (BigCrush, PractRand). For the cube this means some states might get visited more than others, which biases the cover time in practice.

And there's a detail few people remember: the concept of "random walk" assumes independence between each move. But the cube group has relations between its generators (R · R' = identity, R² · R² = identity, etc). A naive random walk wastes a considerable percentage of moves on redundant inversions. If you implement a smart random walk that avoids applying R right after R', you reduce the effective move space from 18 to about 15, but you still don't come anywhere close to what a BFS does.

What it all means

The project's goal was to visualize the infinite monkey theorem. But what it actually shows, in practice, are three things:

The first is the absurd size of the cube's state space. 43 quintillion sounds like a big number, but it only makes sense when you compare it to the speed of a modern computer and realize that even iterating billions of times per second you still need centuries.

The second is the difference between a structured algorithm and brute force. Kociemba solves any cube in milliseconds. The random walk would solve it in billions of years. The difference isn't hardware, it's mathematical structure. When you understand the problem, you attack the graph with IDA* and pattern databases. When you don't, you wait for the universe to die.

The third, and this is the one that interests me most as a dev, is that randomness in computing is a useful fiction. We code as if Math.random() were truly random, but it's just a deterministic sequence that looks random to human eyes. For small problems this doesn't matter. For problems the size of the Rubik's cube, it brushes up against the theoretical limit of what deterministic computation can do.

Final thoughts

The infinite monkey is probably never going to solve your Rubik's cube. The math says it will, on average, after enough time to extinguish several civilizations. Practical computing says it'll probably enter some deterministic PRNG cycle and just keep spinning there forever.

But the monkey keeps trying. And there's something poetic about that: a process that's mathematically guaranteed to work but computationally impossible to test.

If you want to leave your own monkey running while you do something else, just open the iframe below (or go to https://live.junyo.dev/rubikcube/ directly). I left it running on my secondary monitor for a few days while writing this post. The cube didn't get solved. But I think it must be getting close.

By the way, if you want to know the exact probability of the cube being solved after N moves: it's approximately N/(4.3 × 10¹⁹). After one million moves, the chance is about 0.0000000000023%. Good luck.

Comentários

0Nenhum comentário ainda. Seja o primeiro!